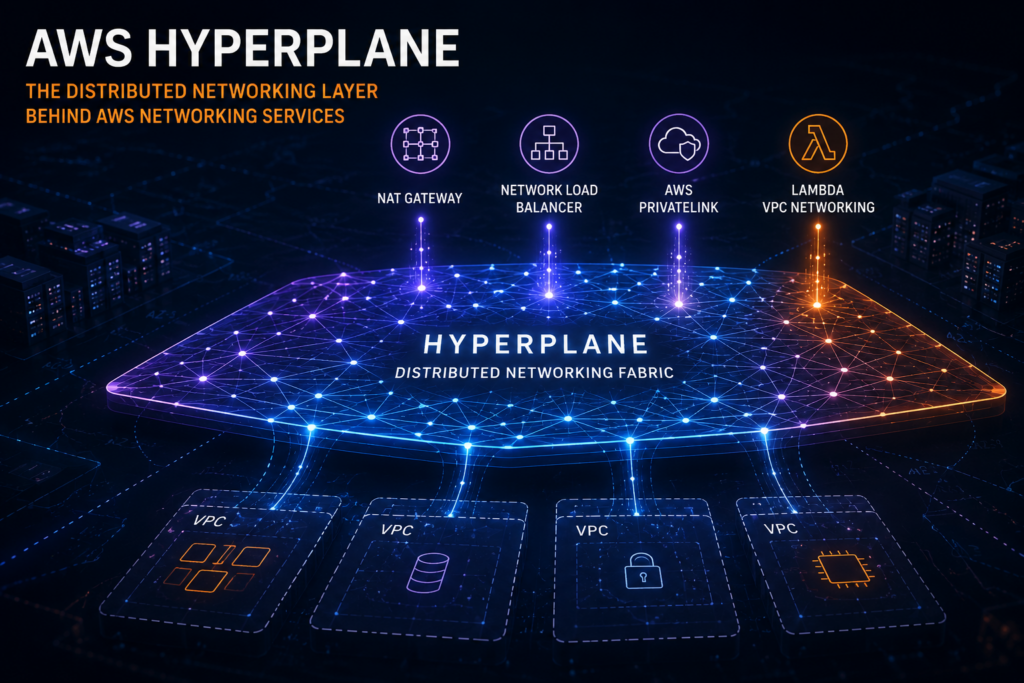

Most AWS users interact with Hyperplane almost every day without realizing it.

Create an AWS NAT Gateway?

You are likely interacting with Hyperplane infrastructure.

Deploy a Network Load Balancer?

Hyperplane again.

Use AWS PrivateLink?

Still Hyperplane.

It is one of the most important pieces of AWS infrastructure that customers never directly see, yet it quietly powers some of the most scalable networking services in the cloud.

What makes Hyperplane particularly interesting is that it reflects a much bigger shift inside AWS:

networking stopped being tied to appliances and became distributed software running at hyperscale.

And once you understand that idea, a lot of AWS networking design suddenly starts making much more sense.

AWS had a scaling problem

Traditional networking models relied heavily on:

- appliances

- centralized traffic processing

- fixed scaling boundaries

- individual devices handling traffic

That approach becomes difficult at hyperscale.

As AWS grew, it became increasingly inefficient to build every networking service independently. Instead of creating separate platforms for:

- NAT

- load balancing

- private connectivity

AWS built a shared distributed networking layer underneath many of these services.

That layer became Hyperplane.

The key idea behind Hyperplane

Most people think services like NAT Gateway or NLB are standalone products.

Architecturally, they are closer to:

interfaces sitting on top of a distributed networking platform.

The service you create in the console is mostly:

- configuration

- policy

- abstraction

Much of the underlying traffic processing and network virtualization is then handled by Hyperplane-based infrastructure.

That design helps explain why AWS networking services can:

- scale extremely fast

- survive failures transparently

- spread traffic across large fleets of hosts rather than a single device

- behave more like distributed systems than traditional appliances

How Hyperplane changed AWS networking

Traditionally, networking infrastructure looked something like this:

Client → Load Balancer → Server

Or:

Private Subnet → NAT Instance → Internet

These models came with operational overhead and hard limits:

- patching

- scaling

- failover management

- throughput limitations

Hyperplane changed that model significantly.

Instead of relying entirely on fixed appliances, AWS introduced distributed systems capable of handling:

- packet processing

- NAT

- connection tracking

- traffic distribution

- failover

across fleets of infrastructure inside an AWS region.

Networking increasingly became distributed software.
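To make "traffic distribution" and "failover" concrete, here is a minimal sketch of one generic way a fleet can share flows: rendezvous (highest-random-weight) hashing on a connection's 5-tuple. AWS has not published this as Hyperplane's algorithm, so treat it only as an illustration. The `Flow` type, node names, and addresses are all hypothetical. The sketch shows two properties described above. Every packet of a flow maps to the same node, and when a node fails, only the flows that node owned move elsewhere.

```python
import hashlib
from typing import NamedTuple


class Flow(NamedTuple):
    """A connection identified by its 5-tuple (hypothetical illustration type)."""
    src_ip: str
    src_port: int
    dst_ip: str
    dst_port: int
    protocol: str


def _score(flow: Flow, node: str) -> int:
    # Hash the node name together with the flow's 5-tuple; any stable hash works.
    key = f"{node}|{flow.src_ip}|{flow.src_port}|{flow.dst_ip}|{flow.dst_port}|{flow.protocol}"
    return int.from_bytes(hashlib.sha256(key.encode()).digest()[:8], "big")


def pick_node(flow: Flow, nodes: list[str]) -> str:
    """Rendezvous hashing: the healthy node with the highest score owns the flow."""
    if not nodes:
        raise ValueError("no healthy nodes")
    return max(nodes, key=lambda node: _score(flow, node))


if __name__ == "__main__":
    fleet = ["node-a", "node-b", "node-c", "node-d"]  # hypothetical fleet
    flows = [Flow("10.0.1.5", 40000 + i, "203.0.113.10", 443, "tcp") for i in range(1000)]

    before = {f: pick_node(f, fleet) for f in flows}
    # Simulate node-b failing by removing it from the healthy set.
    survivors = [n for n in fleet if n != "node-b"]
    after = {f: pick_node(f, survivors) for f in flows}

    moved = [f for f in flows if before[f] != after[f]]
    # Only flows that node-b owned should have been reassigned.
    assert all(before[f] == "node-b" for f in moved)
    print(f"{len(moved)} of {len(flows)} flows moved after node-b failed")
```

Rendezvous hashing needs no shared lookup table. Any node can compute the same owner for a flow independently, which suits a fleet where members come and go.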

NAT Gateway makes more sense once you understand Hyperplane

Before managed NAT Gateway existed, many AWS environments relied on NAT instances.

Private Subnet → NAT Instance → Internet

It worked, but it created bottlenecks and operational complexity.

Today, when you create a NAT Gateway, AWS takes on most of that operational burden by running the gateway on distributed, Hyperplane-based infrastructure.

From the customer perspective:

- create gateway

- update routes

- done
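Those three steps can be sketched with the AWS SDK for Python (boto3). The function receives an EC2 client as a parameter, for example `boto3.client("ec2")`, so it can be tried against a stub without credentials. The function name and its arguments are hypothetical. `create_nat_gateway`, the `nat_gateway_available` waiter, and `create_route` are real EC2 client operations. The sketch assumes a public NAT gateway, which needs a public subnet and an Elastic IP allocation.

```python
from typing import Any


def create_nat_and_route(ec2: Any, public_subnet_id: str, eip_allocation_id: str,
                         private_route_table_id: str) -> str:
    """Create a NAT gateway and point a private route table's default route at it.

    `ec2` is expected to be a boto3 EC2 client, e.g. boto3.client("ec2").
    """
    # Step 1: create the gateway in a public subnet, using an Elastic IP allocation.
    resp = ec2.create_nat_gateway(SubnetId=public_subnet_id, AllocationId=eip_allocation_id)
    nat_id = resp["NatGateway"]["NatGatewayId"]

    # Wait until the gateway is available before routing traffic to it.
    ec2.get_waiter("nat_gateway_available").wait(NatGatewayIds=[nat_id])

    # Step 2: send the private subnet's internet-bound traffic to the gateway.
    ec2.create_route(
        RouteTableId=private_route_table_id,
        DestinationCidrBlock="0.0.0.0/0",
        NatGatewayId=nat_id,
    )
    # Step 3: done. Nothing here configures scaling or failover.
    return nat_id
```

No parameter controls capacity, instance size, or failover. That is the operational burden the managed service absorbs.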

Behind the scenes, AWS manages:

- scaling

- failover

- connection distribution

- infrastructure resilience

without exposing that complexity to customers.
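Connection tracking is what lets a NAT device send reply packets back to the right private host. The toy table below is a minimal sketch of that idea. It assumes one public IP and a sequential port allocator. `SourceNat` and all addresses are hypothetical, and the sketch says nothing about how AWS actually implements NAT Gateway. Real NAT also expires idle entries and handles protocol details this sketch ignores. On a single NAT instance, this state lives on one machine. A distributed design has to keep equivalent state available across a fleet.

```python
from dataclasses import dataclass, field


@dataclass
class SourceNat:
    """Toy source-NAT table with connection tracking (illustration only)."""
    public_ip: str
    next_port: int = 1024
    max_port: int = 65535
    # (private_ip, private_port, dst_ip, dst_port, proto) -> public port
    outbound: dict = field(default_factory=dict)
    # (public_port, remote_ip, remote_port, proto) -> (private_ip, private_port)
    inbound: dict = field(default_factory=dict)

    def translate_out(self, src_ip, src_port, dst_ip, dst_port, proto):
        """Rewrite an outbound packet's source, reusing the mapping for a known flow."""
        key = (src_ip, src_port, dst_ip, dst_port, proto)
        if key not in self.outbound:
            if self.next_port > self.max_port:
                raise RuntimeError("port pool exhausted")
            port = self.next_port
            self.next_port += 1
            self.outbound[key] = port
            self.inbound[(port, dst_ip, dst_port, proto)] = (src_ip, src_port)
        return self.public_ip, self.outbound[key]

    def translate_in(self, public_port, remote_ip, remote_port, proto):
        """Map a reply back to the private host, or None if no tracked flow matches."""
        return self.inbound.get((public_port, remote_ip, remote_port, proto))


if __name__ == "__main__":
    nat = SourceNat(public_ip="198.51.100.7")  # documentation-range address
    print(nat.translate_out("10.0.1.5", 51000, "203.0.113.10", 443, "tcp"))  # ('198.51.100.7', 1024)
    print(nat.translate_in(1024, "203.0.113.10", 443, "tcp"))  # ('10.0.1.5', 51000)
    print(nat.translate_in(1024, "192.0.2.99", 443, "tcp"))  # None: no tracked flow, so drop it
```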

NLB is not “just a load balancer”

A lot of people still imagine a Network Load Balancer as a single appliance sitting in front of instances.

That is not really how modern AWS networking operates.

NLB relies on Hyperplane-based infrastructure for scalable traffic distribution and connection handling.

Conceptually, it looks closer to this:

Client → Distributed Networking Layer → Targets

This helps explain why NLB can provide:

- very low latency

- fast scaling

- massive connection volumes

- rapid failover

The customer sees:

Load Balancer

AWS operates:

Distributed networking infrastructure

Those are very different architectural models.
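The difference between what the customer sees and what AWS operates is visible in the API itself. This boto3 sketch creates an internal Network Load Balancer, a TCP target group, and a forwarding listener. Nothing in it describes nodes, capacity, or failover. The function name, resource names, and port are placeholders. The `elbv2` client, for example `boto3.client("elbv2")`, is passed in so the function can be exercised offline. Registering targets with `register_targets` is left out.

```python
from typing import Any


def create_internal_nlb(elbv2: Any, name: str, subnet_ids: list[str], vpc_id: str,
                        port: int = 443) -> dict[str, str]:
    """Create an internal NLB that forwards TCP `port` to a new target group.

    `elbv2` is expected to be a boto3 Elastic Load Balancing v2 client.
    """
    lb = elbv2.create_load_balancer(
        Name=name,
        Type="network",       # a Network Load Balancer, not an Application Load Balancer
        Scheme="internal",
        Subnets=subnet_ids,   # typically one subnet per Availability Zone
    )["LoadBalancers"][0]

    tg = elbv2.create_target_group(
        Name=f"{name}-tg",
        Protocol="TCP",
        Port=port,
        VpcId=vpc_id,
        TargetType="instance",
    )["TargetGroups"][0]

    listener = elbv2.create_listener(
        LoadBalancerArn=lb["LoadBalancerArn"],
        Protocol="TCP",
        Port=port,
        DefaultActions=[{"Type": "forward", "TargetGroupArn": tg["TargetGroupArn"]}],
    )["Listeners"][0]

    return {
        "load_balancer_arn": lb["LoadBalancerArn"],
        "target_group_arn": tg["TargetGroupArn"],
        "listener_arn": listener["ListenerArn"],
    }
```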

PrivateLink becomes much easier to understand

AWS PrivateLink is another excellent example of Hyperplane concepts in practice.

Without PrivateLink, private connectivity between environments often requires:

- VPC peering

- Transit Gateway

- route management

- CIDR coordination

With Hyperplane acting as part of the underlying connectivity layer, AWS can abstract much of that complexity away.

Conceptually:

Consumer VPC → Interface Endpoint → Distributed AWS Networking Layer → Provider Service

The customer experience becomes significantly simpler even though the underlying infrastructure remains highly sophisticated.

That pattern appears repeatedly across AWS:

complexity moves inward into the platform.
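On the consumer side, the PrivateLink chain described earlier reduces to a single API call. This boto3 sketch creates an interface endpoint. It contains no peering connection, route-table entry, or CIDR coordination. The function name and IDs are placeholders. Private DNS is off by default here because it only works when the provider's service supports a private DNS name.

```python
from typing import Any


def create_interface_endpoint(ec2: Any, vpc_id: str, service_name: str,
                              subnet_ids: list[str], security_group_ids: list[str],
                              private_dns: bool = False) -> str:
    """Create an interface VPC endpoint (PrivateLink) in the consumer VPC.

    `ec2` is expected to be a boto3 EC2 client; `service_name` is the
    provider's endpoint service name.
    """
    resp = ec2.create_vpc_endpoint(
        VpcEndpointType="Interface",
        VpcId=vpc_id,
        ServiceName=service_name,
        SubnetIds=subnet_ids,                 # an endpoint network interface per subnet
        SecurityGroupIds=security_group_ids,  # controls who may reach the endpoint
        PrivateDnsEnabled=private_dns,        # enable only if the service supports it
    )
    # No peering, route-table, or CIDR parameters are involved.
    return resp["VpcEndpoint"]["VpcEndpointId"]
```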

Hyperplane also influenced Lambda networking

Older Lambda VPC integrations were known for slow cold starts caused partly by ENI creation and attachment overhead.

AWS later introduced Hyperplane-based networking improvements to reduce ENI-related scaling and cold-start limitations.

The result included:

- faster startup times

- better scaling behavior

- reduced networking overhead
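The sharing behind those improvements can be modeled in a few lines. As the Lambda documentation describes it, functions in the same account that use the same subnet and security-group combination share a Hyperplane ENI. Previously, interfaces were created as the function scaled. The sketch below is a simplified model under that assumption. `FunctionVpcConfig`, the function names, and the IDs are hypothetical. It ignores any extra interfaces Lambda might add under heavy connection load.

```python
from typing import Iterable, NamedTuple


class FunctionVpcConfig(NamedTuple):
    """VPC settings of one Lambda function (hypothetical illustration type)."""
    name: str
    subnet_ids: tuple[str, ...]
    security_group_ids: tuple[str, ...]


def hyperplane_eni_keys(functions: Iterable[FunctionVpcConfig]) -> set[tuple[str, frozenset[str]]]:
    """Return the distinct (subnet, security-group set) pairs across functions.

    Simplified model: one shared ENI per pair, independent of how many
    functions use it or how many concurrent executions they run.
    """
    keys = set()
    for fn in functions:
        sgs = frozenset(fn.security_group_ids)  # treat the security groups as a set
        for subnet in fn.subnet_ids:
            keys.add((subnet, sgs))
    return keys


if __name__ == "__main__":
    fns = [
        FunctionVpcConfig("orders", ("subnet-a", "subnet-b"), ("sg-app",)),
        FunctionVpcConfig("billing", ("subnet-a", "subnet-b"), ("sg-app",)),  # shares with orders
        FunctionVpcConfig("reports", ("subnet-a",), ("sg-app", "sg-db")),     # new combination
    ]
    print(len(hyperplane_eni_keys(fns)))  # 3
```

Under this model, the interface count depends on configuration rather than concurrency. That is why scaling up no longer waits on new ENIs being attached.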

Again, the broader architectural pattern is what matters most:

AWS increasingly centralizes networking capabilities into shared distributed systems.

Hyperplane reflects how AWS thinks about infrastructure

One of the most interesting things about AWS architecture is that the company rarely thinks purely in terms of individual servers or appliances.

Instead, AWS increasingly builds:

- distributed systems

- regional software layers

- shared infrastructure fabrics

Hyperplane is a strong example of that philosophy.

Customers interact with:

- APIs

- abstractions

- managed services

while AWS operates large-scale distributed systems underneath.

Final thoughts

Hyperplane is one of the foundational technologies behind modern AWS networking.

It quietly supports services such as:

- AWS NAT Gateway

- Network Load Balancer

- AWS PrivateLink

- parts of AWS Lambda networking

Most customers never directly interact with it.

But once you understand the ideas behind Hyperplane, many AWS networking services stop feeling like isolated magic and start making much more architectural sense.
